AI Visibility for B2B SaaS: Why 44% of Brands Are Invisible to Buyers

Umar

73% of B2B buyers now use AI tools such as ChatGPT in their vendor research. Learn why 44% of SaaS brands are invisible to AI and how to fix it, with fresh 2026 data.

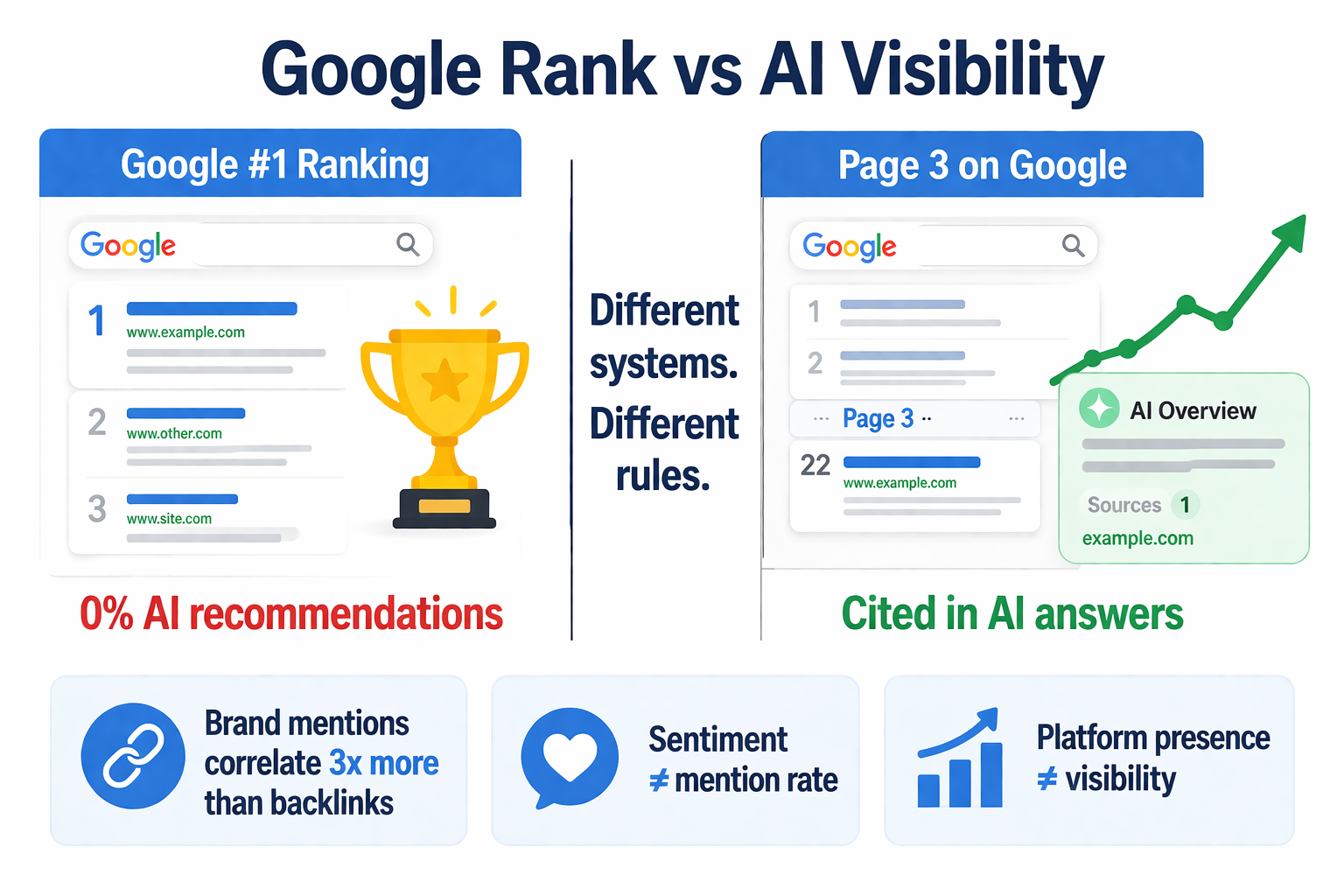

A mid-market SaaS tool ranking #1 on Google for its core keyword appeared in 0% of ChatGPT recommendations for the same category. When a buyer asked "what's the best [category] tool for [use case]", the market leader simply wasn't in the answer. The #1 rank on Google meant nothing to that buyer, because that buyer never opened Google.

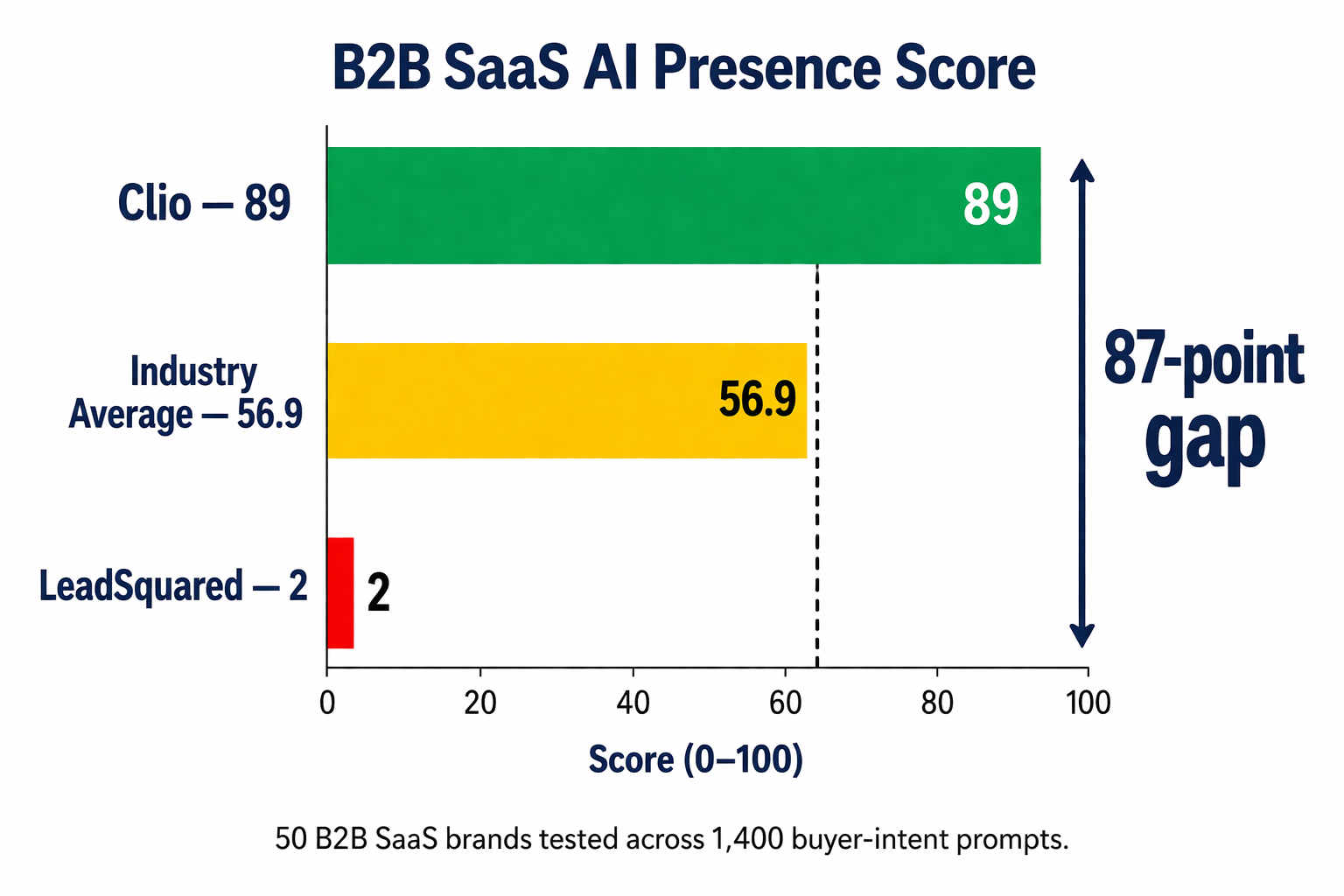

This isn't an isolated case. A benchmark study published by DerivateX on April 5, 2026 analyzed 50 B2B SaaS companies across ChatGPT, Perplexity, Claude, and Gemini using 1,400 buyer-intent prompts. The average AI Presence Score came in at 56.9 out of 100. Forty-four percent of companies scored below 50. The gap between the highest scorer (Clio at 89) and the lowest (LeadSquared at 2) was 87 points, despite both operating in established software categories with active marketing teams.

For B2B SaaS marketers, this is the window of opportunity. Buyer behavior has shifted. Marketing investment hasn't caught up. And the brands that adjust now will operate in a market where most competitors haven't even started.

The Numbers Every B2B SaaS Marketer Should Know

Several independent studies published between January and April 2026 all point to the same conclusion: AI-assisted research is now mainstream for B2B buyers, and traditional marketing measurement has a major blind spot.

73% of B2B buyers now use AI tools in their research process. This figure comes from a March 2026 analysis by Averi covering 680 million citations across ChatGPT, Claude, and Google AI. G2's survey of 1,000+ software buyers found an even higher number: 87% say AI chatbots are changing how they research software. Half of buyers now start their journey in an AI chatbot instead of Google, and that figure jumped 71% compared to a survey G2 ran just four months earlier.

47% of enterprise buyers start vendor research with AI assistants instead of Google. A December 2025 Treble survey of 300 CIOs, CISOs, and CTOs confirmed this. Once buyers enter evaluation mode, 93% use AI to summarize and compare vendors. That's not early-stage experimentation. That's the overwhelming majority making AI core to the evaluation process.

AI search traffic converts at 14.2% compared to Google organic's 2.8%. The 5.1x conversion premium is the metric that matters for revenue-focused marketing leaders. Fewer AI-referred visitors produce disproportionately more pipeline, because the buyers who click through from an AI recommendation are already at the consideration or decision stage. AI-referred traffic has up to 4.4x the value of traditional organic, according to Semrush.
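The arithmetic behind that premium is easy to check. The short sketch below uses only the two conversion rates quoted above; the target of 100 conversions is a hypothetical number picked for illustration, not a figure from any of the studies.

```python
# Conversion rates as quoted in the article.
AI_RATE = 0.142        # AI search traffic
ORGANIC_RATE = 0.028   # Google organic traffic


def conversion_premium(ai_rate: float, organic_rate: float) -> float:
    """How many times better AI-referred traffic converts than organic."""
    return ai_rate / organic_rate


def visitors_needed(target_conversions: int, rate: float) -> float:
    """Visitors required to reach a conversion target at a given rate."""
    return target_conversions / rate


# Hypothetical target, chosen only for illustration.
TARGET = 100

premium = conversion_premium(AI_RATE, ORGANIC_RATE)
print(f"Conversion premium: {premium:.1f}x")
print(f"Visitors for {TARGET} conversions via AI search: {visitors_needed(TARGET, AI_RATE):.0f}")
print(f"Visitors for {TARGET} conversions via organic: {visitors_needed(TARGET, ORGANIC_RATE):.0f}")
```

At these rates, roughly 700 AI-referred visitors would produce the same number of conversions as about 3,570 organic visitors.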

Only 22% of marketers track AI visibility. Despite 73% buyer adoption, marketing teams haven't caught up. Fewer than 26% plan to develop content specifically for AI citations. 64% are unsure how to measure AI search success. This is the gap that creates the competitive opportunity.

72% of marketing leaders expect AI to surpass SEO as the primary visibility channel within three years. But fewer than one in four have implemented any measurement. The teams that start measuring now will have 12-18 months of data and optimization experience before their competitors begin.

Why Ranking on Google Doesn't Predict AI Visibility

The uncomfortable reality for most SEO-focused SaaS teams: the playbook that built your Google rankings doesn't translate to ChatGPT recommendations. As the opening example shows, a brand that ranks #1 for its category keyword on Google can still appear in 0% of AI chatbot recommendations for equivalent buyer queries. Meanwhile, smaller brands with well-structured content show up in AI results despite sitting on page 3 of Google.

There are structural reasons for this disconnect. Google ranks pages based on backlinks, domain authority, and keyword relevance, then shows links for users to evaluate. AI synthesizes information from multiple sources and produces a single direct answer. The selection criteria are different.

The data from the DerivateX benchmark makes this visible in a way few teams have quantified before. Consider three patterns from the study:

Platform presence doesn't equal visibility. Make is present on all four platforms (ChatGPT, Perplexity, Claude, Gemini) and scored 40. Zapier is absent from Claude entirely and scored 63. The difference is how often and how prominently each brand appears when AI systems are asked category questions by actual buyers. Being crawlable by the AI isn't the same as being recommended.

Positive sentiment doesn't save low mention rates. Ten of the 50 companies scored a perfect 20 out of 20 on sentiment (Close, WebEngage, Kissflow, CleverTap, Freshworks, Razorpay, BrightEdge, Mindbody, Mangools, and Toast) but had mention rates of 8 out of 30 or lower. When AI mentions these brands, the framing is uniformly positive. The gap is frequency, not perception. That's a distribution problem, not a brand problem.

Brand web mentions matter 3x more than backlinks. The 2026 Loganix analysis found that brand web mentions show a Spearman correlation of 0.664 with AI citation rates. Backlinks correlate at roughly 0.2. For B2B SaaS teams that invested heavily in link building, this is a fundamental shift: unlinked brand mentions in the right publications now track AI visibility far more closely than backlinks do.
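For readers who want to run the same kind of check on their own category, here is a minimal sketch of a Spearman rank correlation in plain Python. It is not the Loganix methodology, which the article doesn't describe. The brand figures are hypothetical placeholders, and the function names are illustrative.

```python
from statistics import mean


def average_ranks(values):
    """Rank values from 1..n, giving tied values the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman correlation: Pearson correlation computed on the ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5


# Hypothetical data for six brands, for illustration only.
web_mentions = [120, 45, 300, 10, 80, 60]
backlinks = [900, 1500, 400, 700, 200, 1100]
ai_citation_rate = [0.30, 0.25, 0.41, 0.05, 0.12, 0.20]

print(f"mentions vs citations:  {spearman(web_mentions, ai_citation_rate):.3f}")
print(f"backlinks vs citations: {spearman(backlinks, ai_citation_rate):.3f}")
```

Because Spearman works on ranks rather than raw values, it captures whether brands with more mentions tend to be cited more often, without assuming the relationship is linear. It shows association, not cause.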

The B2B SaaS Buyer Journey in 2026

To understand how to fix the visibility gap, you need to see what the buyer journey actually looks like now. According to Forrester's 2025 survey of 4,000+ buyers, 61% of the B2B buying journey completes before the buyer contacts a vendor. That figure increases when AI tools provide synthesized comparisons that previously required multiple site visits.

The typical path now looks like this:

Stage 1. Category discovery. The buyer types a natural-language question into ChatGPT: "What's the best customer support platform for a mid-size SaaS company with a small team?" The AI returns a shortlist of 3-5 recommendations with explanations. The buyer never sees a ranked list of 10 blue links. Whoever isn't in that shortlist simply doesn't exist for that buyer at this stage.

Stage 2. Comparison. The buyer asks follow-up questions: "How does [Brand A] compare to [Brand B]?" The AI synthesizes from review sites, comparison articles, Reddit threads, and product documentation. If your differentiation content isn't structured for extraction, the AI will use whatever third-party source gets cited most.

Stage 3. Validation. The buyer cross-references on Perplexity, Claude, or Google AI Mode. Each platform pulls from different sources with different weights. This is where cross-platform consistency becomes critical. A brand that only shows up on ChatGPT but is absent from Perplexity loses credibility.

Stage 4. Shortlist and demo. Only now does the buyer visit vendor websites. By this point, they've already formed strong preferences based on AI-synthesized research. If you're not on the shortlist, you don't get the demo request. This ties directly to the problem covered in RepuAI's guide on why your brand doesn't appear in ChatGPT.

The implication is straightforward: AI visibility is where B2B SaaS deals are being won or lost before your sales team ever gets a lead. Traditional SEO matters for other stages, but this pre-demo stage now lives almost entirely inside AI tools.

A Practical Framework for B2B SaaS AI Visibility

Based on the patterns the benchmark study revealed, here's what actually moves the needle:

1. Audit where you stand across all four platforms. Run 20-30 buyer-intent prompts across ChatGPT, Perplexity, Claude, and Gemini. Use real queries your ICP would type: "best [category] for [use case]", "[your brand] vs [competitor]", "top [category] tools for [company size]". Log whether you're mentioned, in what position, and with what framing. This is your baseline. The "8 metrics for measuring AI visibility" framework covers the specific KPIs to track.

2. Build comparison and category content. Listicle-format content ("Top N" comparisons and rankings) accounts for nearly 60% of all cited URLs in AI search. Product pages account for only 8.5%. If your content strategy centers on your product pages, you're structurally disadvantaged. Publish comparison articles that explicitly name competitors, "best of" roundups for your category, and decision frameworks buyers can extract and quote.

3. Earn third-party mentions on sources AI actually cites. 85% of AI brand mentions come from third-party pages. For B2B SaaS specifically, the key sources are G2, Capterra, Gartner Peer Insights, industry-specific review sites, Reddit discussions in relevant subreddits, and listicles on publications like TechCrunch, SaaStr, and vertical trade pubs. Don't chase just any press. Identify which publications AI references when discussing your category (ask ChatGPT and note the sources it cites), then pursue mentions specifically from those.

4. Strengthen entity signals with structured data. Organization schema with sameAs links to LinkedIn, Crunchbase, and Wikipedia (if applicable) helps AI engines confirm your brand as a verified entity. Article schema with accurate dateModified tells AI your content is current. The schema markup guide covers which properties matter most and how to audit what you have.

5. Create extractable answer blocks. The first 60 words after each H2 heading should be a complete, standalone answer that an AI could quote directly. Write as if the AI might pull that paragraph into its response. Follow with supporting evidence: statistics, research citations, customer quotes. This is different from traditional SEO copywriting and requires content restructuring, not just optimization. RepuAI's GEO guide breaks down the specific content patterns.

6. Monitor continuously, not quarterly. AI responses change week to week as models access new content and retrain. What ChatGPT says about your brand this week may differ next month. RepuAI tracks your brand's presence across ChatGPT, Perplexity, Gemini, and Claude automatically, so you catch sentiment shifts and citation changes before they affect pipeline. The free AI Visibility Checker gives you a quick baseline to start with.
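For step 4, the structured data can be sketched as JSON-LD generated from a small script. This is a minimal illustration, not a complete schema audit. "ExampleCo", the example.com URLs, the profile links, and the dates are all hypothetical placeholders. The property names (sameAs, datePublished, dateModified) are standard schema.org vocabulary.

```python
import json


def organization_schema(name, url, same_as):
    """Organization markup with sameAs links to the brand's other profiles."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),
    }


def article_schema(headline, date_published, date_modified, publisher):
    """Article markup; dateModified signals how current the content is."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,
        "dateModified": date_modified,
        "publisher": {
            "@type": "Organization",
            "name": publisher["name"],
            "url": publisher["url"],
        },
    }


def to_script_tag(schema):
    """Wrap a schema dict in the script tag that goes in the page head."""
    body = json.dumps(schema, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Hypothetical brand and URLs, for illustration only.
org = organization_schema(
    "ExampleCo",
    "https://www.example.com",
    [
        "https://www.linkedin.com/company/exampleco",
        "https://www.crunchbase.com/organization/exampleco",
    ],
)
article = article_schema("ExampleCo vs. Alternatives", "2026-01-10", "2026-04-02", org)

print(to_script_tag(org))
print(to_script_tag(article))
```

Generating the markup from one source of truth keeps the brand name and profile links identical on every page, which is the point of an entity signal.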

What Separates Visible B2B SaaS Brands from Invisible Ones

Looking at the DerivateX benchmark and the buyer behavior data together, the visible brands share a few traits that the invisible ones don't:

They treat AI visibility as a measurable KPI, not a side project. The brands at the top of the leaderboard have weekly tracking in place. They know their baseline, monitor changes, and tie interventions to measurable results. For most, this happens alongside SEO measurement rather than replacing it.

They publish comparison content that names competitors. This feels counterintuitive for marketing teams used to "don't mention the competition." But AI models heavily weight head-to-head comparison pages when deciding which brands to recommend for a category. Avoiding competitor names cedes the comparison narrative to whoever does name them (often the competitor itself).

They earn mentions in publications AI already trusts. The visible brands invest in earning citations from a narrow list of publications. PR budgets get reallocated from "broad coverage" to "specific publications AI references for our category."

They maintain cross-platform consistency. The brands that show up strongly on one platform but weakly on others are more fragile than they look. Buyer AI tool preferences vary, and platform market share shifts. A high AI visibility score requires consistent citation across ChatGPT, Perplexity, Claude, and Gemini. The differences in how each AI engine cites brands mean you need platform-specific awareness.

They fix hallucinations and negative mentions quickly. When AI gets their pricing wrong or confuses them with a competitor, they have a process to find and fix those errors rather than letting bad information calcify in the model's understanding of their brand.

Start This Week

The pattern across every data point in this article is the same: AI visibility is measurable, the gap is larger than most teams realize, and the brands that start now will have a sustained advantage as AI becomes the default entry point for B2B vendor research.

Here's what to do this week. Run 20 buyer-intent prompts across ChatGPT, Perplexity, Claude, and Gemini for your category. Record whether your brand appears, in what position, and with what framing. Compare your presence to 3-5 direct competitors using the same prompts. The gap you'll find is your current opportunity.
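A spreadsheet works fine for this audit, but if you prefer a script, a minimal sketch of the log might look like the following. It assumes you run each prompt by hand and record the result yourself; it does not call any AI platform. The field names and sample rows are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass
class AuditRow:
    platform: str            # "ChatGPT", "Perplexity", "Claude", or "Gemini"
    prompt: str              # the buyer-intent prompt you typed
    brand: str               # your brand or a competitor
    mentioned: bool          # did the answer name this brand?
    position: Optional[int]  # 1 = first brand named; None if absent
    framing: str             # "positive", "neutral", "negative", or "" if absent


def mention_rates(rows):
    """Share of prompts on each platform in which each brand was mentioned."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for row in rows:
        key = (row.brand, row.platform)
        totals[key] += 1
        hits[key] += int(row.mentioned)
    return {key: hits[key] / totals[key] for key in totals}


# Hypothetical sample rows, for illustration only.
rows = [
    AuditRow("ChatGPT", "best CRM for startups", "YourBrand", True, 3, "positive"),
    AuditRow("ChatGPT", "top CRM tools for SMBs", "YourBrand", False, None, ""),
    AuditRow("ChatGPT", "best CRM for startups", "CompetitorA", True, 1, "positive"),
    AuditRow("ChatGPT", "top CRM tools for SMBs", "CompetitorA", True, 2, "neutral"),
]

for (brand, platform), rate in sorted(mention_rates(rows).items()):
    print(f"{brand} on {platform}: mentioned in {rate:.0%} of prompts")
```

Keeping one row per brand, platform, and prompt makes the competitor comparison a simple lookup instead of a separate exercise.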

Then pick the single highest-impact fix based on what the audit reveals. If your sentiment is good but mention rate is low, the problem is distribution: invest in earning mentions on the sources AI cites for your category. If your mention rate is reasonable but sentiment is mixed, the problem is messaging: update your website and third-party content for clarity and accuracy. If you're invisible across all platforms, the problem is foundational: start with structured data, extractable answer blocks, and comparison content.
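That triage can be written down as a small decision function. The sketch below is one way to encode it. The three thresholds are assumptions chosen for illustration, not values from the benchmark, and the combined case (low mentions with mixed sentiment) is not covered by the article, so its label is also an assumption.

```python
def pick_fix(
    mention_rate: float,
    sentiment_score: float,
    invisible_below: float = 0.05,       # assumed cutoff for "invisible"
    low_mention_below: float = 0.30,     # assumed cutoff for "low mention rate"
    good_sentiment_from: float = 0.70,   # assumed cutoff for "good sentiment"
) -> str:
    """Map audit results (both on a 0..1 scale) to the fix to start with."""
    if mention_rate < invisible_below:
        # Invisible across platforms: structured data, answer blocks,
        # and comparison content come first.
        return "foundational"

    low_mention = mention_rate < low_mention_below
    good_sentiment = sentiment_score >= good_sentiment_from

    if low_mention and good_sentiment:
        return "distribution"   # earn mentions on the sources AI cites
    if not low_mention and not good_sentiment:
        return "messaging"      # fix clarity and accuracy of content
    if low_mention and not good_sentiment:
        # Not covered by the article; labeled here as an assumption.
        return "distribution and messaging"
    return "monitor"            # healthy on both axes; keep tracking


print(pick_fix(mention_rate=0.10, sentiment_score=0.90))
print(pick_fix(mention_rate=0.50, sentiment_score=0.40))
print(pick_fix(mention_rate=0.00, sentiment_score=0.00))
```

Adjust the cutoffs to your own baseline. What matters is the order of the checks, with invisibility handled before sentiment is considered at all.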

B2B buyers have already moved. 73% are using AI in their research. The question isn't whether to adapt your marketing strategy. It's how quickly you can start measuring, and how much ground your competitors will cover before you do.