How to Measure AI Visibility: Metrics, KPIs, and Reporting

Umar

Learn the 8 essential metrics for measuring AI visibility in 2026. From mention rate to AI Share of Voice, build a KPI framework that proves business impact.

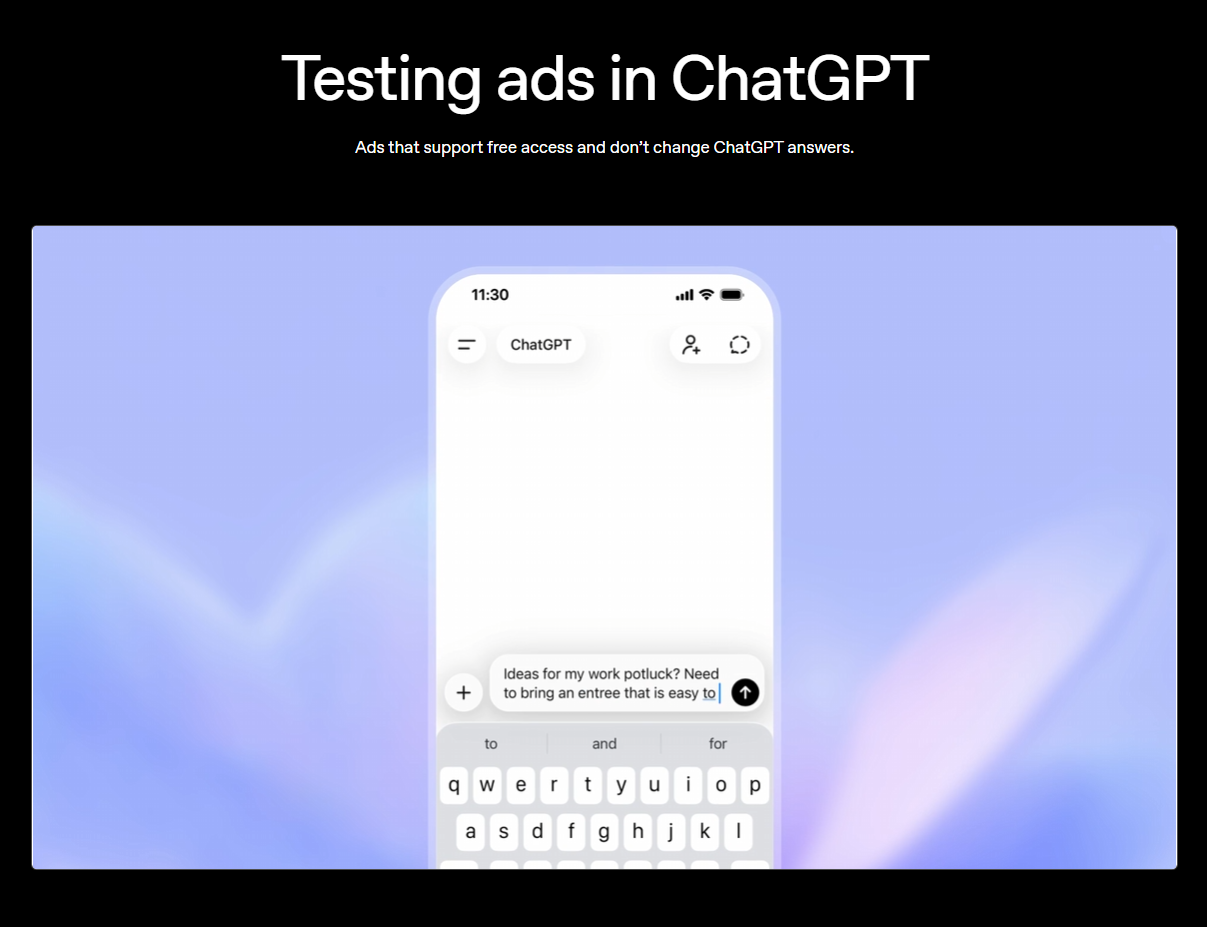

Your brand ranks on page one of Google. Your SEO dashboard shows healthy traffic. Everything looks fine. Except 38% of your potential customers now start product research in ChatGPT, not Google. And you have zero data on whether AI systems mention your brand, recommend competitors instead, or describe your product with outdated information.

That's the measurement gap most marketing teams are facing right now. Traditional analytics tools weren't built for a world where influence happens inside AI-generated answers, often without a single click to track.

Why Traditional Metrics Don't Tell the Full Story

Google Analytics tracks clicks. Search Console tracks impressions and rankings. Neither captures what happens when a user asks ChatGPT "what's the best project management tool for remote teams?" and your competitor gets recommended while your brand doesn't appear at all.

The core problem: AI visibility operates before the click. Research from Peec AI shows that 85% of consumers who start searches with AI still cross-reference through traditional search before converting. But most attribution models only capture the second step. The AI recommendation that shaped the decision gets zero credit in your analytics.

This creates a dangerous blind spot. Your branded search volume could be declining not because of SEO issues, but because AI systems stopped mentioning your brand three months ago. Without AI-specific measurement, you'd never connect those dots.

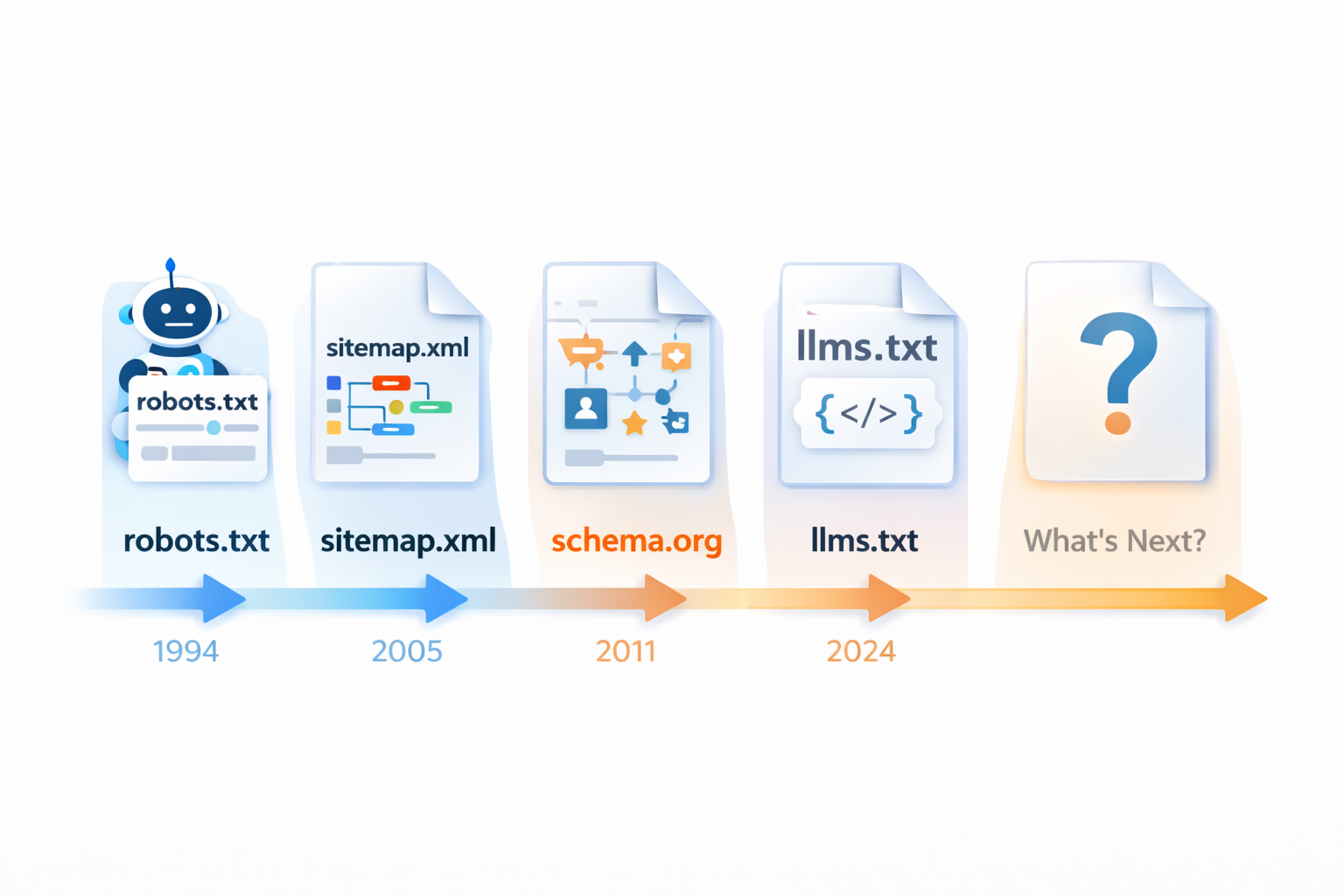

Here's what makes AI visibility measurement fundamentally different from SEO metrics:

AI answers are probabilistic, not deterministic. Ask the same question twice and you might get different brands mentioned. AirOps research found that only 30% of brands stay visible from one AI answer to the next, and just 20% remain consistent across five consecutive runs.

There's no fixed "position." In traditional search, you're rank 3 or rank 7. In AI-generated responses, your brand might be mentioned first, last, embedded in a comparison, or absent entirely. The concept of ranking translates loosely at best.

Influence happens without clicks. When ChatGPT recommends your competitor, the user might type that brand name directly into Google afterward. Your competitor gets a "branded search" win. The AI engine gets zero attribution. This makes AI-to-branded-search correlation one of the most important signals to track.

The 8 Metrics That Actually Matter

After analyzing frameworks from Peec AI, Meltwater, Backstage SEO, and Similarweb, plus input from practitioners at Graph Digital and LLM Pulse, here are the eight metrics that form a complete AI visibility measurement system. They're organized by the question each one answers.

Tier 1: Are You in the Room?

1. Brand Mention Rate

What it measures: The percentage of relevant AI-generated responses that include your brand name.

Why it matters: This is the most fundamental metric. If AI systems don't mention you, nothing else matters. It's the equivalent of "indexed or not" in traditional SEO, but for generative engines.

How to calculate: (Number of prompts where your brand appears / Total relevant prompts monitored) x 100

Benchmark: Brands with strong AI visibility typically achieve 60%+ mention rates for their core category prompts. Similarweb's 2026 AI Brand Visibility Index shows that top brands in competitive categories like electronics or finance can reach 80%+, while the tenth-ranked brand in the same category might sit at 10-15%.

What to watch for: Track this across different AI platforms separately. Your mention rate in ChatGPT might be 45% while Perplexity shows 70%, because each model sources and weights information differently.
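As a rough sketch of the formula above, split by platform: the snippet below assumes you keep a simple log of (platform, prompt, response text) tuples. That layout, the brand names, and the function name are all hypothetical and not taken from any tool's export format.

```python
from collections import defaultdict


def mention_rate_by_platform(records, brand):
    """Return {platform: mention rate in percent} for one brand.

    Each record is a (platform, prompt, response_text) tuple. This is an
    assumed logging layout, not a real tool's schema.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for platform, _prompt, response in records:
        totals[platform] += 1
        # Naive case-insensitive substring match. Real brand names may
        # need alias lists or word-boundary checks.
        if brand.lower() in response.lower():
            hits[platform] += 1
    return {p: 100 * hits[p] / totals[p] for p in totals}


# Hypothetical sample log with made-up brands.
records = [
    ("chatgpt", "best pm tool for remote teams", "Try BrandB or BrandC."),
    ("chatgpt", "pm tool for startups", "Acme and BrandB are popular."),
    ("perplexity", "best pm tool for remote teams", "Acme leads here."),
    ("perplexity", "pm tool for startups", "Consider Acme."),
]
print(mention_rate_by_platform(records, "Acme"))
```

Keeping the platform as a key makes it easy to spot the kind of ChatGPT-versus-Perplexity gap described above.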

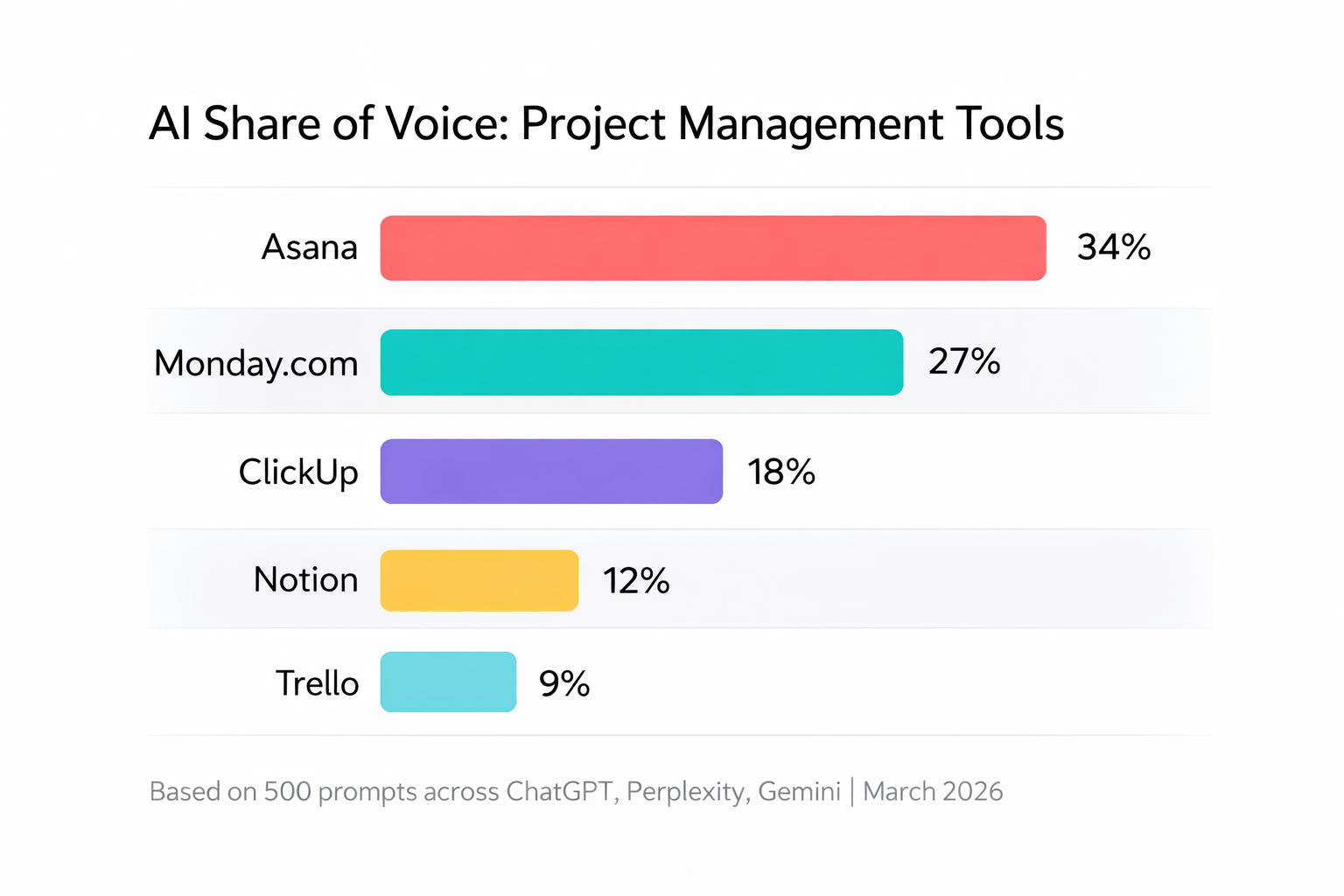

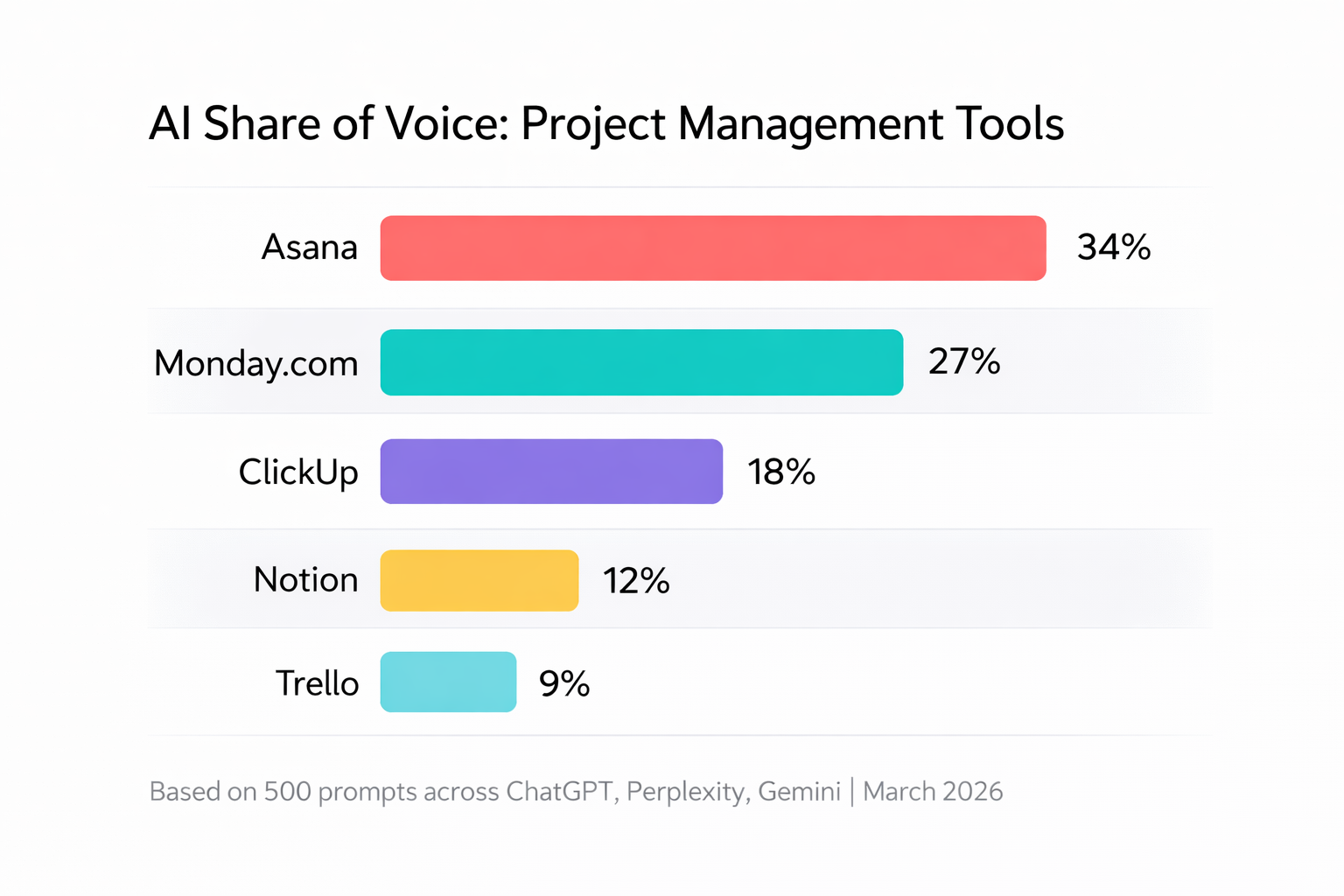

2. AI Share of Voice (AI SoV)

What it measures: Your brand's share of mentions relative to competitors across a defined set of AI prompts.

Why it matters: Mention rate tells you if you're in the conversation. AI SoV tells you how much of the conversation you own versus competitors. This is the closest equivalent to traditional "market share" in AI search.

How to calculate: (Your brand mentions / Total mentions across all tracked brands, including yours) x 100

Benchmark: Category leaders typically hold 25-40% AI SoV. A 94-point spread between first and tenth in competitive categories like electronics tells you the space is effectively owned by one brand. A 56-point spread in categories like travel suggests genuine competition.
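Here is one possible way to compute AI SoV from a batch of responses. It assumes each brand is counted at most once per response and that the denominator includes your own brand, so the shares add up to 100. Brand names and the function name are placeholders.

```python
from collections import Counter


def share_of_voice(responses, brands):
    """Return {brand: share of mentions in percent} across tracked brands.

    Assumption: a brand counts once per response, however many times
    the response repeats its name.
    """
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand] += 1
    total = sum(counts.values())
    if total == 0:
        return {brand: 0.0 for brand in brands}
    return {brand: 100 * counts[brand] / total for brand in brands}


# Hypothetical responses with made-up brands.
responses = [
    "BrandA and BrandB are solid options.",
    "BrandB is the top pick.",
    "Try BrandA, BrandB, or BrandC.",
    "BrandC and BrandB both work well.",
]
print(share_of_voice(responses, ["BrandA", "BrandB", "BrandC"]))
```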

Tier 2: How Are You Positioned?

3. Citation Rate

What it measures: The percentage of AI responses mentioning your brand that also include a direct link to your content as a source.

Why it matters: Being mentioned is good. Being cited with a link is better. Citation means the AI model has identified your content as authoritative enough to reference. Not all platforms show links (ChatGPT often doesn't), but Perplexity and Google AI Overviews consistently do.

How to calculate: (Responses citing your URL / Total responses mentioning your brand) x 100

Important distinction: Mentions and citations are different metrics. Your brand can be mentioned without being cited (the AI knows your name from training data but doesn't link to your content). Tracking both reveals whether your brand has name recognition, content authority, or both.
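To make the mention-versus-citation split concrete, here is a minimal sketch. It assumes each logged response is a dict with the answer text and a list of cited URLs. The dict keys, the `acme.example` domain, and the helper names are illustrative assumptions.

```python
from urllib.parse import urlparse


def _host_matches(url, domain):
    """True if the URL's host is the domain or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return host == domain or host.endswith("." + domain)


def citation_rate(responses, brand, domain):
    """Percent of brand-mentioning responses that also cite the domain."""
    domain = domain.lower()
    mentioned = [r for r in responses if brand.lower() in r["text"].lower()]
    if not mentioned:
        return 0.0
    cited = sum(
        1
        for r in mentioned
        if any(_host_matches(url, domain) for url in r["cited_urls"])
    )
    return 100 * cited / len(mentioned)


# Hypothetical log entries; every name and URL here is invented.
responses = [
    {"text": "Acme is a strong choice.", "cited_urls": ["https://www.acme.example/guide"]},
    {"text": "Acme and BrandB both work.", "cited_urls": ["https://reviews.example/top-tools"]},
    {"text": "Many teams use Acme.", "cited_urls": []},
    {"text": "BrandB is popular.", "cited_urls": ["https://brandb.example/"]},
    {"text": "Consider Acme for this.", "cited_urls": []},
]
print(citation_rate(responses, "Acme", "acme.example"))
```

A high mention rate paired with a low citation rate would suggest name recognition without content authority.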

4. Average Position in AI Responses

What it measures: Where your brand typically appears within multi-brand AI answers (first mentioned, second, fifth, etc.).

Why it matters: Being mentioned third in a list of ten recommendations carries less weight than being mentioned first with detailed context. Position correlates with user attention, though less rigidly than traditional search rankings.

Caveat: This metric is less stable than search rankings. AI responses vary significantly between runs, so track rolling averages over weeks, not individual snapshots.
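One way to smooth out run-to-run noise is a rolling average over your logged positions. The sketch below assumes position means the order in which tracked brands are first named in a response, which is only one reasonable definition. Function names and brands are hypothetical.

```python
def brand_position(text, brand, tracked_brands):
    """1-based rank of `brand` by first appearance, or None if absent."""
    lowered = text.lower()
    first_seen = {b: lowered.find(b.lower()) for b in tracked_brands}
    present = sorted((idx, b) for b, idx in first_seen.items() if idx >= 0)
    order = [b for _idx, b in present]
    return order.index(brand) + 1 if brand in order else None


def rolling_mean(values, window):
    """Trailing mean over `window` entries, skipping None (brand absent)."""
    out = []
    for i in range(len(values)):
        chunk = [v for v in values[max(0, i - window + 1): i + 1] if v is not None]
        out.append(sum(chunk) / len(chunk) if chunk else None)
    return out


text = "We suggest BrandB first, then BrandA, and BrandC for larger teams."
print(brand_position(text, "BrandA", ["BrandA", "BrandB", "BrandC"]))

# Hypothetical weekly positions; None marks a week with no mention.
weekly_positions = [1, 3, None, 2]
print(rolling_mean(weekly_positions, window=2))
```

Skipping absent weeks keeps the average about position only. Absence itself is already captured by mention rate.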

5. Sentiment Score

What it measures: Whether AI systems describe your brand positively, neutrally, or negatively when you're mentioned.

Why it matters: Visibility without positive framing can actually hurt you. If ChatGPT mentions your brand but adds "however, users frequently report customer service issues," that can be worse than not appearing at all.

This is one of the most undervalued metrics and one of the most actionable. Peec AI documented a case where Revolut's AI presence was being shaped by Sitejabber, a review site where 75% of negative reviews came from single-review accounts. Identifying the source of negative sentiment is the first step to fixing it.

How to track: Most AI visibility tools offer sentiment analysis. If tracking manually, categorize each mention as positive, neutral, or negative, and note the specific claims being made.
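If you are labeling mentions by hand, a small tally like the one below can turn the labels into a trackable number. The net score used here (percent positive minus percent negative) is an assumed convention for illustration, not a formula from any of the tools mentioned.

```python
from collections import Counter

VALID_LABELS = ("positive", "neutral", "negative")


def sentiment_summary(labels):
    """Return percent shares per label plus a net score (positive - negative)."""
    unknown = set(labels) - set(VALID_LABELS)
    if unknown:
        raise ValueError(f"unexpected labels: {sorted(unknown)}")
    if not labels:
        return None
    counts = Counter(labels)
    shares = {k: 100 * counts[k] / len(labels) for k in VALID_LABELS}
    shares["net"] = shares["positive"] - shares["negative"]
    return shares


# Hypothetical manual labels for four mentions.
labels = ["positive", "positive", "neutral", "negative"]
print(sentiment_summary(labels))
```

Keep the specific claims in a separate notes column. The score shows the trend, while the notes show what to fix.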

Tier 3: What's the Business Impact?

6. AI Referral Traffic

What it measures: Visits to your website that originate directly from AI platforms.

Why it matters: This is your most direct signal that AI recommendations are driving actual visits. GA4 and Adobe Analytics categorize visits from ChatGPT, Claude, and Perplexity as referral traffic. You can isolate and track these specifically.

Reality check: AI referral volume is still small for most sites (often under 1% of total traffic). But the quality is measurably higher. Similarweb data shows users referred from ChatGPT spend an average of 15 minutes on site versus 8 from Google, generate 12 pageviews versus 9, and convert at 7% versus 5%.

How to track in GA4: Go to Reports > Acquisition > Traffic Acquisition. Filter by Session Source and look for chatgpt.com, perplexity.ai, gemini.google.com, and claude.ai.
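If you export the report, a few lines of code can flag AI sources and compute their share of sessions. This sketch assumes the export has been reduced to (session source, sessions) pairs. It does not call any GA4 API, and the numbers are made up.

```python
# Source domains named in the text above.
AI_SOURCES = ("chatgpt.com", "perplexity.ai", "gemini.google.com", "claude.ai")


def is_ai_source(source):
    """True if the session source is one of the AI domains or a subdomain."""
    s = source.strip().lower()
    return any(s == d or s.endswith("." + d) for d in AI_SOURCES)


def ai_referral_share(rows):
    """Percent of all sessions whose source is an AI platform."""
    total = sum(sessions for _source, sessions in rows)
    ai = sum(sessions for source, sessions in rows if is_ai_source(source))
    return 100 * ai / total if total else 0.0


# Hypothetical export rows: (session source, sessions).
rows = [
    ("google", 900),
    ("chatgpt.com", 6),
    ("www.perplexity.ai", 3),
    ("(direct)", 91),
]
print(ai_referral_share(rows))
```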

7. Branded Search Correlation

What it measures: Whether changes in AI visibility correlate with changes in branded search volume over time.

Why it matters: When AI tools mention your brand more often, people tend to search for you by name afterward. This is the "invisible bridge" between AI visibility and traditional metrics. A rise in branded search volume that correlates with improved AI mention rates is strong evidence that AI visibility is driving downstream demand.

How to track: Plot your AI mention rate trend alongside Google Search Console branded query volume on the same timeline. Look for branded search changes that follow mention rate changes with a lag of 2-4 weeks.
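A lagged correlation is one simple way to check for that delay. The sketch below assumes two equal-length weekly series and computes a plain Pearson correlation at each lag. The data is invented, and a high value at some lag is a signal worth investigating rather than proof.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences (None if flat)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return None
    return cov / sqrt(vx * vy)


def lagged_correlations(mention_rate, branded_search, max_lag):
    """Correlate mention rate at week t with branded search at week t + lag."""
    results = {}
    for lag in range(max_lag + 1):
        xs = mention_rate[: len(mention_rate) - lag]
        ys = branded_search[lag:]
        if len(xs) >= 3:  # too few points gives a meaningless number
            results[lag] = pearson(xs, ys)
    return results


# Hypothetical weekly series; search is built to trail mentions by 2 weeks.
mention_rate = [40, 42, 50, 55, 53, 60, 62, 58]
branded_search = [380, 410, 400, 420, 500, 550, 530, 600]
for lag, r in lagged_correlations(mention_rate, branded_search, max_lag=4).items():
    print(lag, round(r, 3))
```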

8. Competitive Displacement Rate

What it measures: How often a competitor appears in AI responses where your brand previously appeared (or should appear based on relevance).

Why it matters: This is your early warning system. If a competitor starts showing up in prompts where you used to dominate, something changed in the AI's source data. Maybe they published a comparison article that's getting cited. Maybe a negative review surfaced. This metric lets you catch and respond to shifts before they affect pipeline.
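There is no standard formula for this metric, so the sketch below uses one assumed definition: of the prompts where your brand appeared last period, the percentage where it has dropped out and a given competitor now appears. The data layout and names are hypothetical.

```python
def displacement_rate(previous, current, brand, competitor):
    """Percent of previously held prompts now lost to `competitor`.

    `previous` and `current` map each prompt to the set of brands
    mentioned in that period (an assumed logging format).
    """
    held = [p for p, brands in previous.items() if brand in brands and p in current]
    if not held:
        return 0.0
    displaced = [
        p for p in held
        if brand not in current[p] and competitor in current[p]
    ]
    return 100 * len(displaced) / len(held)


# Hypothetical prompt logs for two periods.
previous = {
    "p1": {"Acme", "BrandB"},
    "p2": {"Acme"},
    "p3": {"Acme", "BrandC"},
    "p4": {"Acme"},
    "p5": {"BrandB"},
}
current = {
    "p1": {"Acme", "BrandB"},
    "p2": {"BrandB"},
    "p3": {"Acme"},
    "p4": {"BrandC"},
    "p5": {"BrandB"},
}
print(displacement_rate(previous, current, "Acme", "BrandB"))
```

Running it per competitor shows who is taking over which prompts, which points you to the source content that changed.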

Building Your Reporting Cadence

Not all metrics need the same tracking frequency. Here's a practical cadence:

Weekly (quick health check):

- Brand mention rate across core prompts

- Any new negative sentiment flags

- AI referral traffic volume in GA4

Monthly (strategic review):

- AI Share of Voice vs competitors

- Citation rate trends by platform

- Branded search correlation analysis

- Sentiment trend direction

- Competitive displacement alerts

Quarterly (executive reporting):

- AI Visibility Index (composite score)

- AI referral traffic quality vs other channels

- Pipeline influence estimates

- Content performance: which pages get cited most

- Strategic recommendations for next quarter

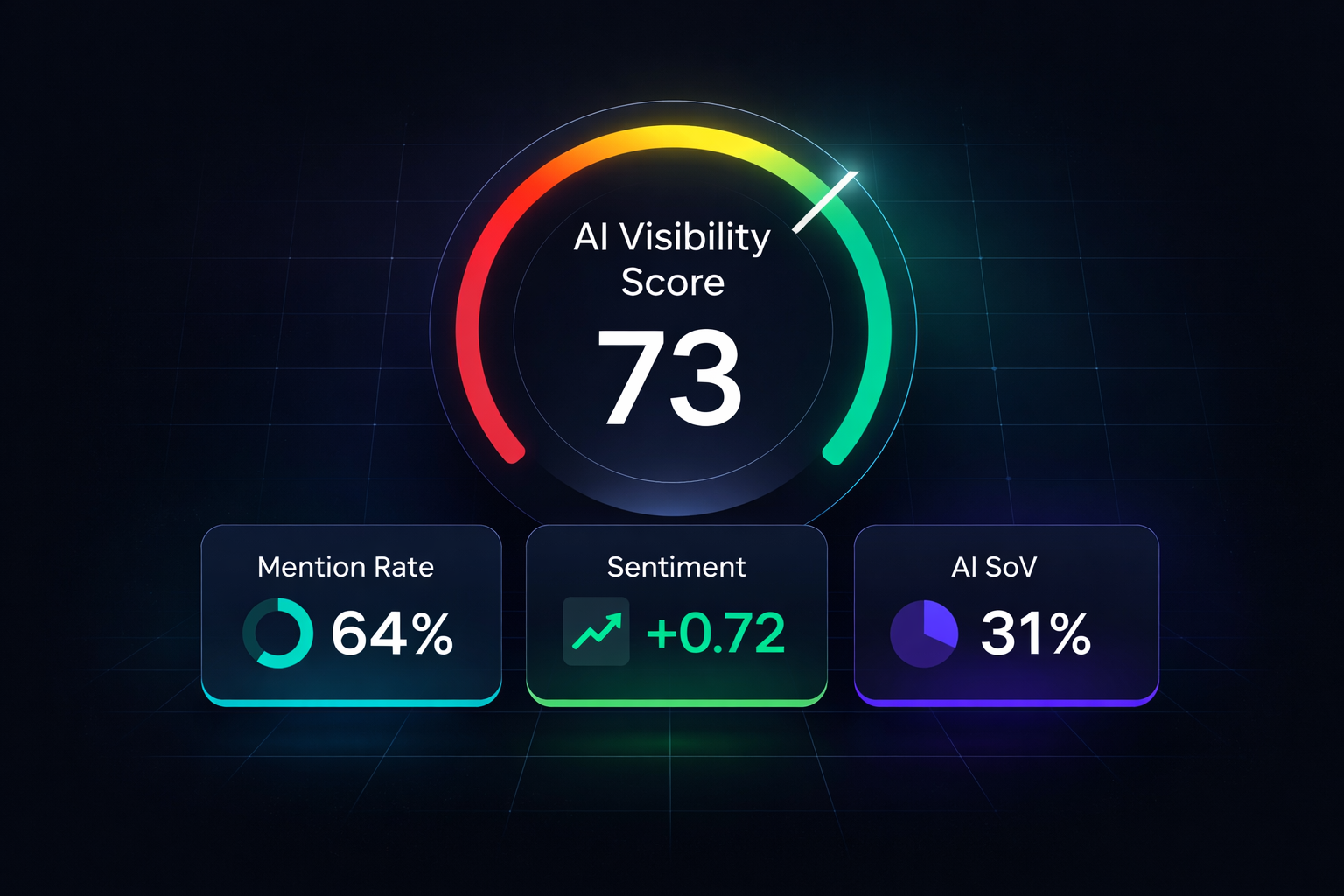

For executive reporting, consider building a composite AI Visibility Index. Normalize each metric to a 0-100 scale, assign weights based on your business priorities (mention rate and sentiment often carry the most weight), and calculate a single number that trends over time. This gives leadership a clear, trackable KPI without drowning them in eight separate metrics.
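A minimal sketch of such an index, assuming min-max normalization and a weighted average. The metric names, ranges, and weights below are placeholders to replace with your own priorities.

```python
def normalize(value, low, high, higher_is_better=True):
    """Scale a raw metric to 0-100 within an expected [low, high] range."""
    if high == low:
        raise ValueError("low and high must differ")
    score = 100 * (value - low) / (high - low)
    score = max(0.0, min(100.0, score))  # clamp out-of-range values
    return score if higher_is_better else 100 - score


def visibility_index(scores, weights):
    """Weighted average of already-normalized 0-100 scores."""
    total_weight = sum(weights.values())
    return sum(scores[name] * weights[name] for name in weights) / total_weight


# Hypothetical normalized scores and weights.
scores = {
    "mention_rate": 60,
    "sentiment": 80,
    "share_of_voice": 40,
    "citation_rate": 20,
}
weights = {"mention_rate": 4, "sentiment": 3, "share_of_voice": 2, "citation_rate": 1}
print(visibility_index(scores, weights))

# Average position is "lower is better", so invert it when normalizing.
print(normalize(3, low=1, high=5, higher_is_better=False))
```

Fix the weights and ranges once and keep them stable. Otherwise changes in the index reflect your scoring choices rather than your visibility.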

The Attribution Problem (and How to Work Around It)

Let's be honest: clean attribution from AI visibility to revenue doesn't exist yet. The journey is too fragmented. A user might see your brand in a ChatGPT answer, search your name on Google two weeks later, visit your site from an organic listing, and convert through a retargeting ad. Four touchpoints, and the AI one gets zero credit.

But "hard to attribute" doesn't mean "impossible to measure." Here are three practical workarounds:

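Correlation analysis. Track AI mention rate and branded search volume on the same timeline. If they move together with a 2-4 week lag, you have directional evidence that AI visibility is driving demand, even without click-level proof. Correlation alone doesn't establish causation, so rule out campaigns, launches, and seasonality over the same period.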

Cohort comparison. Compare conversion rates and sales cycle length for leads who arrived during periods of high AI visibility versus low AI visibility. Graph Digital reports that B2B buyers who encounter a brand in AI-powered research demonstrate 18-25% shorter sales cycles.

Direct survey. Add "How did you first hear about us?" to your lead intake. Include "AI assistant (ChatGPT, Perplexity, etc.)" as an option. This simple addition provides first-party attribution data.
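The cohort comparison above can be sketched in a few lines. This assumes you can tag each lead with the visibility period it arrived in, plus its outcome and sales cycle length. The field names and figures are invented for illustration.

```python
from statistics import median


def cohort_summary(leads):
    """Conversion rate and median sales cycle per cohort.

    Each lead is a dict with 'cohort', 'converted' (bool), and
    'cycle_days' (int, or None if not converted). Assumed schema.
    """
    summary = {}
    for cohort in sorted({lead["cohort"] for lead in leads}):
        group = [lead for lead in leads if lead["cohort"] == cohort]
        won = [lead for lead in group if lead["converted"]]
        summary[cohort] = {
            "leads": len(group),
            "conversion_rate": 100 * len(won) / len(group),
            "median_cycle_days": median(l["cycle_days"] for l in won) if won else None,
        }
    return summary


# Hypothetical leads from high- and low-visibility periods.
leads = [
    {"cohort": "high_visibility", "converted": True, "cycle_days": 30},
    {"cohort": "high_visibility", "converted": True, "cycle_days": 40},
    {"cohort": "high_visibility", "converted": False, "cycle_days": None},
    {"cohort": "high_visibility", "converted": False, "cycle_days": None},
    {"cohort": "low_visibility", "converted": True, "cycle_days": 50},
    {"cohort": "low_visibility", "converted": False, "cycle_days": None},
    {"cohort": "low_visibility", "converted": False, "cycle_days": None},
    {"cohort": "low_visibility", "converted": False, "cycle_days": None},
]
print(cohort_summary(leads))
```

With real data you would want far more leads per cohort before reading anything into the difference.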

Setting Up AI Visibility Monitoring

You can start tracking these metrics today with three approaches, ranging from free to full-featured:

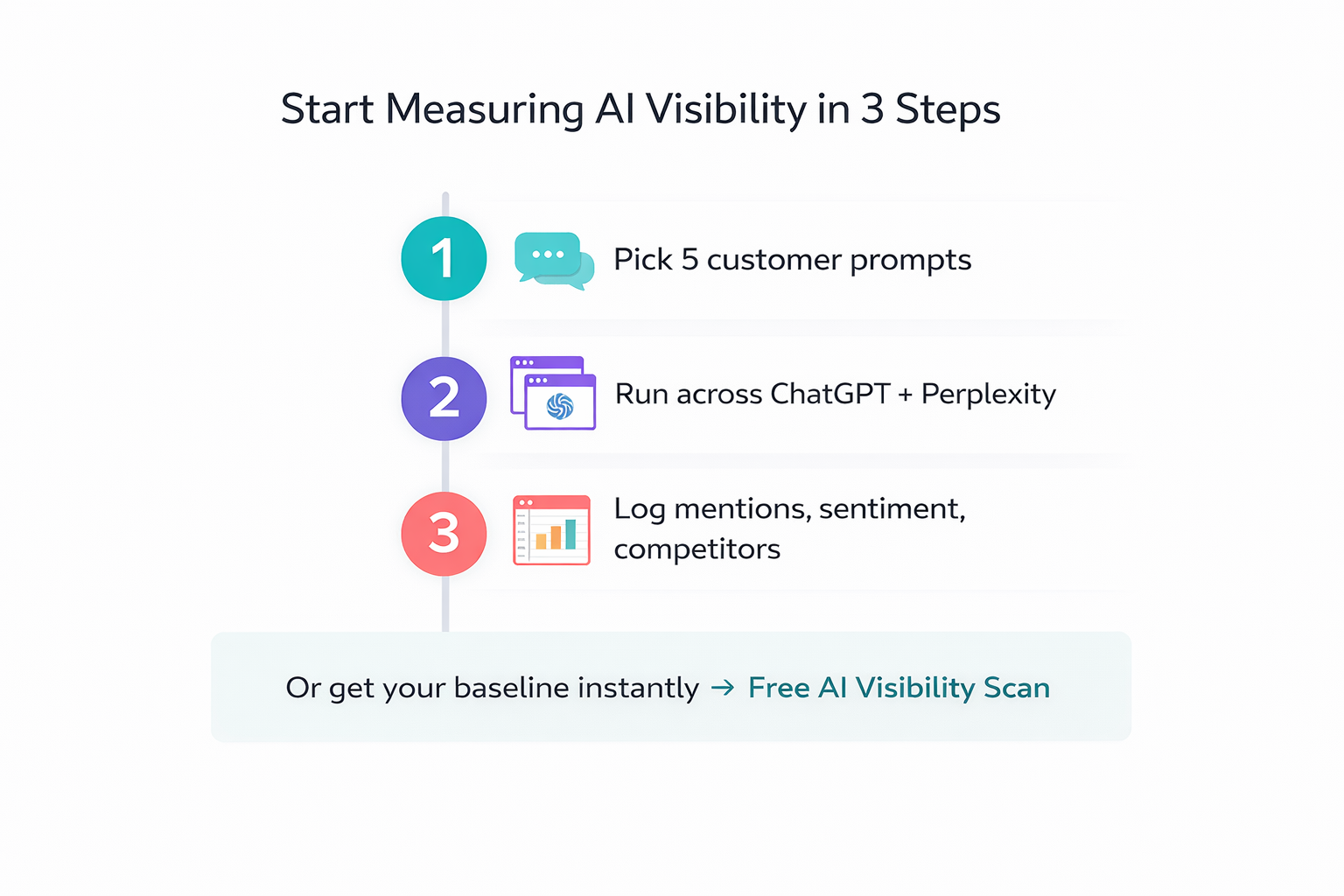

Manual monitoring (free, 30 min/week): Create a list of 10-15 prompts your ideal customers would ask AI systems. Run them through ChatGPT, Perplexity, and Gemini weekly. Log brand mentions, sentiment, and competitor presence in a spreadsheet. This gives you directional data immediately.

GA4 AI traffic tracking (free, 10 min setup): Create a custom segment in GA4 filtering by Session Source containing chatgpt.com, perplexity.ai, gemini.google.com, or claude.ai. Set up a weekly automated report. This captures the traffic impact even without a dedicated tool.

Automated monitoring platform: Tools like RepuAI automate the entire process: tracking mention rates, sentiment, citation accuracy, and competitive positioning across ChatGPT, Perplexity, Gemini, and Claude simultaneously. Instead of manually running prompts, you get a dashboard that surfaces changes and alerts you to shifts in real time. Start with a free AI visibility scan to establish your baseline.

The manual approach works for understanding the landscape. The automated approach works for systematic tracking at scale, especially when you need to monitor dozens of prompts across multiple platforms and report trends to stakeholders.

This measurement framework connects directly to the optimization tactics covered in our GEO guide and the content strategies explained in our article on what types of content get cited by AI engines. If you're also tracking competitors, our competitor tracking framework provides the prompt mapping methodology that feeds directly into these metrics.

Start Measuring This Week

The brands that build systematic AI visibility measurement now will understand their competitive positioning while others remain focused on metrics that tell an increasingly incomplete story. You don't need a perfect system. You need a starting point.

Pick five prompts your customers would ask. Run them through ChatGPT and Perplexity. Log who gets mentioned. That's your baseline. Everything builds from there.