Can AI Search Engines Damage Your Brand Reputation?

Umar

AI search engines can actively hurt your brand. New data shows how ChatGPT and Google AI criticize brands differently and what you can do to protect your reputation.

A CEO at a mid-size tech company asked ChatGPT about his own product last quarter. The response mentioned a pricing limitation that had been fixed eighteen months earlier and described the platform as "suitable for smaller teams," despite serving enterprise clients across three continents. That single answer shaped how every user who asked a similar question perceived his company. He had no idea it was happening.

This isn't an edge case. AI search engines don't just surface information about your brand. They interpret it, compress it, and deliver what amounts to an editorial opinion. And new data from March 2026 confirms that these opinions can be actively negative, inconsistent across platforms, and concentrated at the worst possible moment in the buyer's journey.

The New Data: AI Engines Are Already Criticizing Brands

BrightEdge released research on March 5, 2026, that should make every CMO pay attention. After analyzing brand mentions across Google AI Overviews and ChatGPT, the company found that AI search engines aren't neutral information delivery systems. They actively evaluate brands and express criticism.

Here's what the data shows:

Google AI Overviews are 44% more likely than ChatGPT to surface negative brand sentiment overall. But ChatGPT concentrates its criticism 13 times more heavily near the point of purchase. In practical terms, Google's negativity shapes first impressions and shortlists, while ChatGPT's negativity kills conversions right when people are ready to buy.

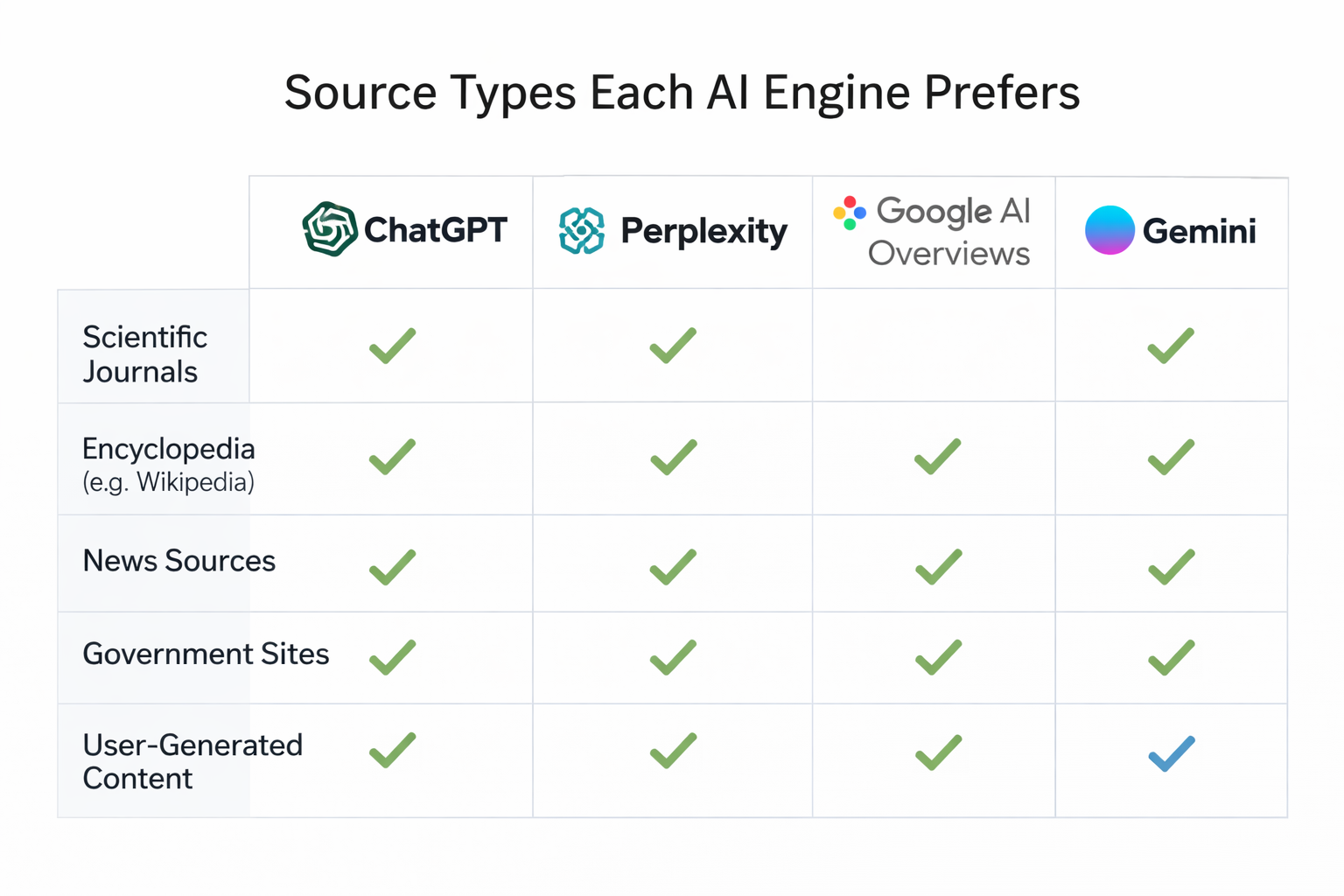

The two engines disagree on which brand to criticize 73% of the time. Identical queries produce different negative assessments because each engine draws from different source ecosystems. Google AI Overviews lean into news-driven sourcing and controversy indexing. ChatGPT reflects product reviews, forums, and social discussions from platforms like Reddit.

To make this concrete: a retailer might face negative sentiment in Google AI Overviews because of a lawsuit in the news, while ChatGPT criticizes the same retailer over a specific product limitation or payment policy. Same brand, different engine, different problem.

Even at low overall percentages, around 2.3% for Google and 1.6% for ChatGPT, the scale means millions of negative brand exposures every month. And unlike a bad review buried on page three of Google, an AI-generated answer repeats the same negative framing for every user who asks a similar question.
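To see how small percentages turn into large numbers, here is a rough back-of-the-envelope sketch. The two rates are the figures quoted above; the monthly answer volume is a made-up placeholder chosen only to illustrate the arithmetic, not a reported statistic.

```python
# Rates of negative brand sentiment quoted above, as fractions.
GOOGLE_NEGATIVE_RATE = 0.023
CHATGPT_NEGATIVE_RATE = 0.016

# ASSUMPTION: hypothetical number of brand-related AI answers per month.
HYPOTHETICAL_MONTHLY_ANSWERS = 500_000_000


def relative_increase(rate_a: float, rate_b: float) -> float:
    """How much more likely rate_a is than rate_b, as a fraction."""
    return rate_a / rate_b - 1


def negative_exposures(monthly_answers: int, negative_rate: float) -> int:
    """Estimated answers per month that carry negative brand sentiment."""
    return round(monthly_answers * negative_rate)


gap = relative_increase(GOOGLE_NEGATIVE_RATE, CHATGPT_NEGATIVE_RATE)
print(f"Google vs ChatGPT: {gap:.0%} more likely to be negative")
print(f"Google: {negative_exposures(HYPOTHETICAL_MONTHLY_ANSWERS, GOOGLE_NEGATIVE_RATE):,}")
print(f"ChatGPT: {negative_exposures(HYPOTHETICAL_MONTHLY_ANSWERS, CHATGPT_NEGATIVE_RATE):,}")
```

The ratio of the two rates works out to roughly 44%, which lines up with the headline comparison. Swap in your own estimate of answer volume to see what the rates would mean for your category.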

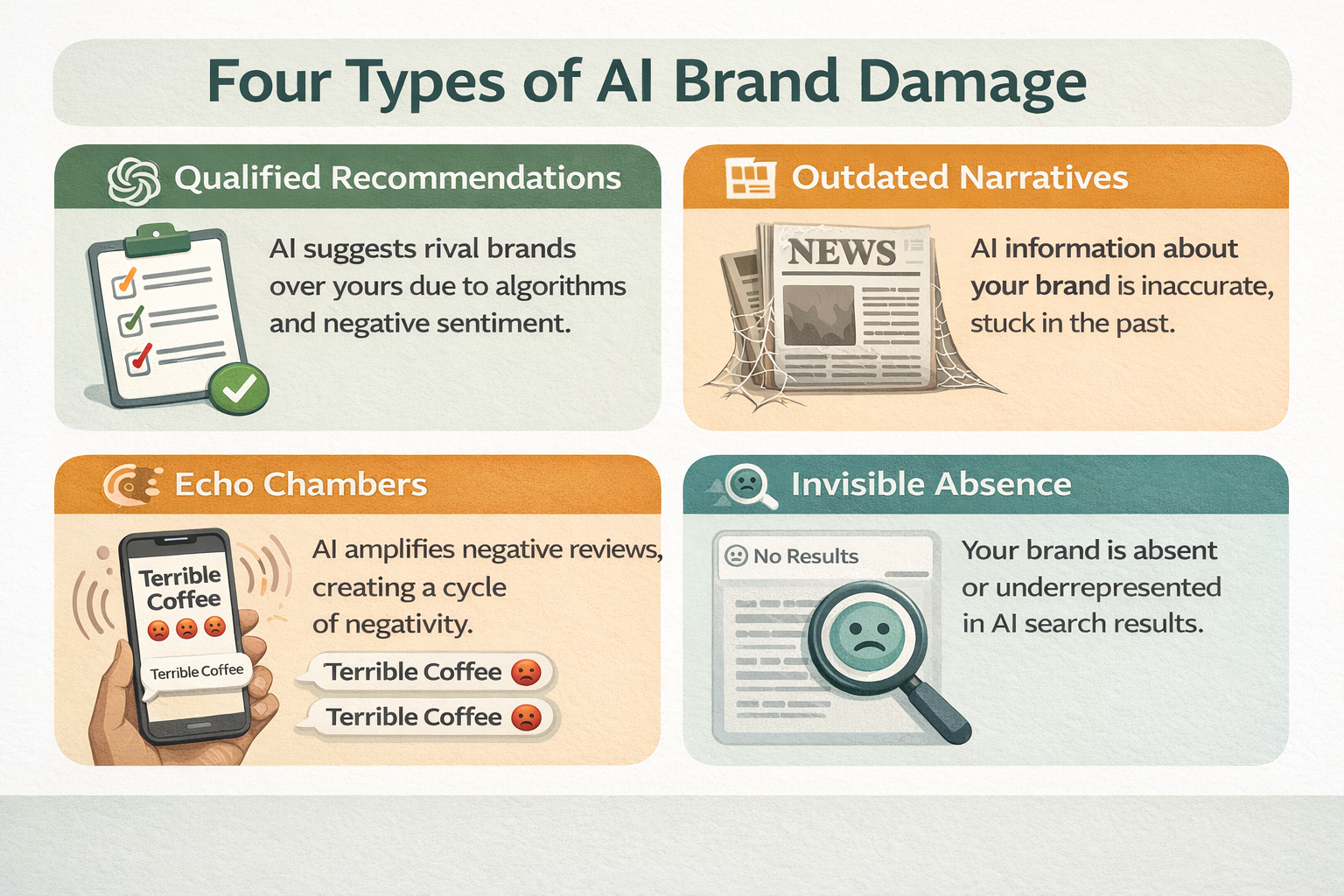

How AI Damages Brands (It's More Subtle Than You Think)

Obvious criticism is easy to spot. But AI-driven brand damage usually shows up in subtler ways that traditional monitoring tools completely miss.

The Qualified Recommendation

An AI model might say: "While Brand X offers some analytics capabilities, users seeking comprehensive tracking often prefer Brand Y or Brand Z." You're mentioned. You're not attacked. But you're immediately positioned as the weaker option. Every user who reads that response walks away thinking your competitors are better. This pattern is common and nearly invisible to anyone who isn't actively monitoring AI responses.

The Outdated Narrative

AI models compress years of information into a single answer. A product recall from 2023, a negative review thread from Reddit, or a discontinued feature that generated complaints can all surface in today's responses as if they're current facts. NP Digital's research found that 47% of marketers encounter AI inaccuracies about their brands on a weekly basis. Your decade of positive coverage can get overshadowed by one bad quarter if that quarter produced enough negative content for the model to learn from.

The Echo Chamber Effect

When initial negative coverage gets discussed across multiple platforms, the AI model encounters that narrative repeatedly during training. Each repetition strengthens the association. A minor criticism on Reddit gets picked up by a blog, which gets cited in a comparison article, which feeds back into the model's understanding of your brand. The original issue may have been resolved months ago, but the AI keeps repeating the old story.

The Invisible Absence

Sometimes the damage isn't what AI says about you. It's that AI says nothing at all. When a potential customer asks for recommendations in your category and your brand doesn't appear, you've lost that opportunity without any signal that it happened. SparkToro's January 2026 research showed that AI recommendations are highly inconsistent: there's less than a 1 in 100 chance that ChatGPT will give the same list of brands in any two responses to the same question. Your brand might appear in one answer and vanish from the next nine.
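Because a single answer tells you so little, one way to measure presence is to run the same prompt several times and count how often your brand shows up. The sketch below assumes you have already collected the brand names from each response yourself; the sample data and function names are hypothetical.

```python
from collections import Counter
from itertools import combinations


def appearance_rates(responses: list[list[str]]) -> dict[str, float]:
    """Share of responses in which each brand appears at least once."""
    if not responses:
        return {}
    counts = Counter()
    for brands in responses:
        counts.update(set(brands))  # count a brand once per response
    return {brand: n / len(responses) for brand, n in counts.items()}


def identical_list_rate(responses: list[list[str]]) -> float:
    """Share of response pairs that name exactly the same set of brands."""
    pairs = list(combinations(responses, 2))
    if not pairs:
        return 0.0
    same = sum(1 for a, b in pairs if set(a) == set(b))
    return same / len(pairs)


# HYPOTHETICAL data: brands named in four runs of the same prompt.
runs = [
    ["Brand X", "Brand Y"],
    ["Brand Y", "Brand Z"],
    ["Brand Y"],
    ["Brand X", "Brand Y"],
]

for brand, rate in sorted(appearance_rates(runs).items()):
    print(f"{brand}: appears in {rate:.0%} of runs")
print(f"Identical lists: {identical_list_rate(runs):.0%} of run pairs")
```

An appearance rate over many runs is a steadier signal than any single answer, which is why one-off spot checks can mislead in either direction.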

Why Traditional Monitoring Doesn't Catch This

If you're relying on social listening tools, review monitoring, or media tracking to protect your brand reputation, you're missing an entire channel.

Social listening measures what people say about you on X (formerly Twitter), forums, and review sites. AI sentiment tracking measures something fundamentally different: what AI systems believe and communicate about you. These two signals can point in opposite directions.

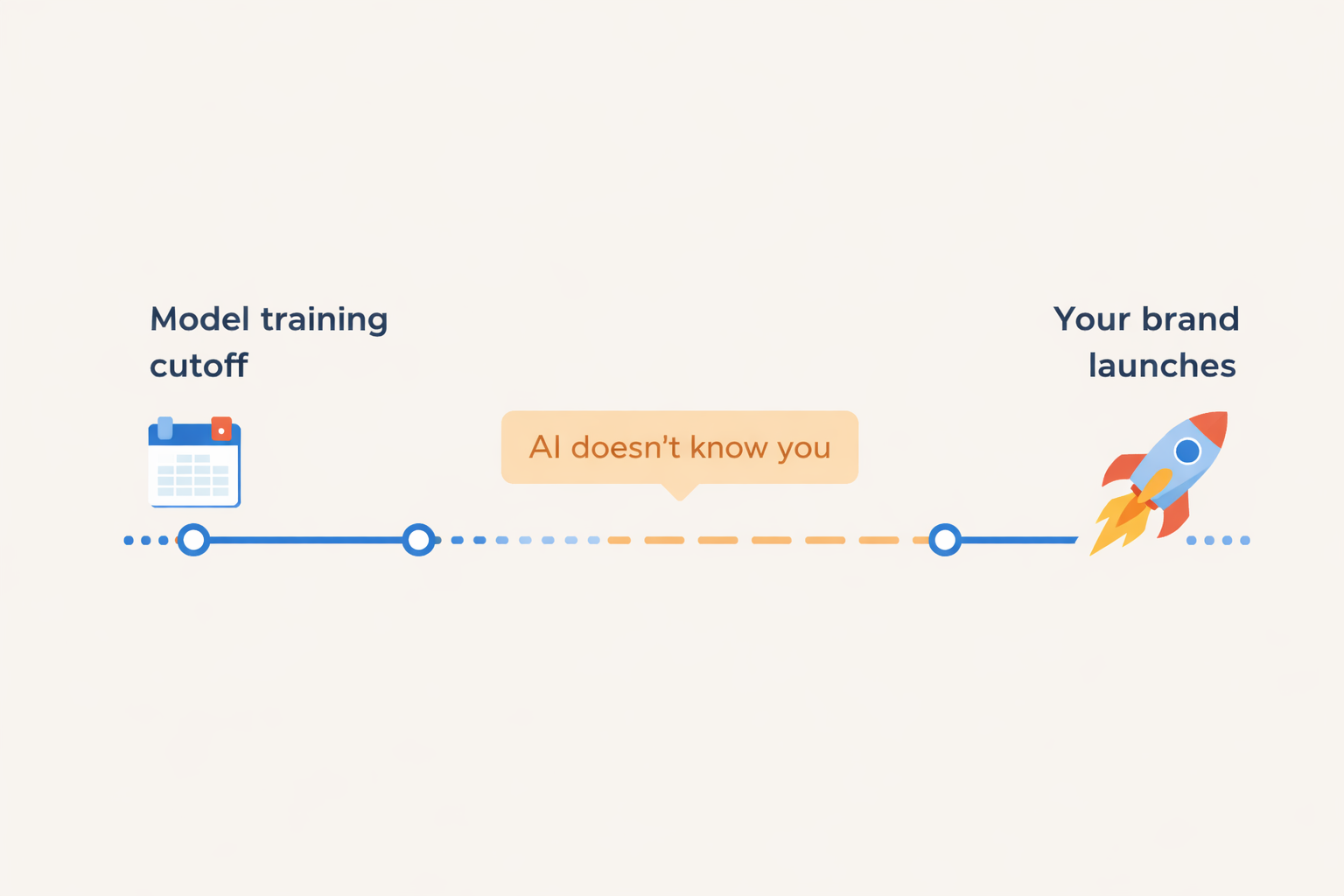

A company can have excellent social sentiment, strong review scores, and growing media coverage, while ChatGPT simultaneously tells users that competitors offer better value. This disconnect happens because AI models don't aggregate current sentiment in real time. They learn from historical patterns in web content, structured data, and archived discussions. Your current reputation and your AI-expressed reputation can be months or even years apart.

The BrightEdge data makes this gap even wider: each AI engine draws from different sources, applies different editorial logic, and criticizes different brands for different reasons. Monitoring just one platform gives you a partial picture at best.

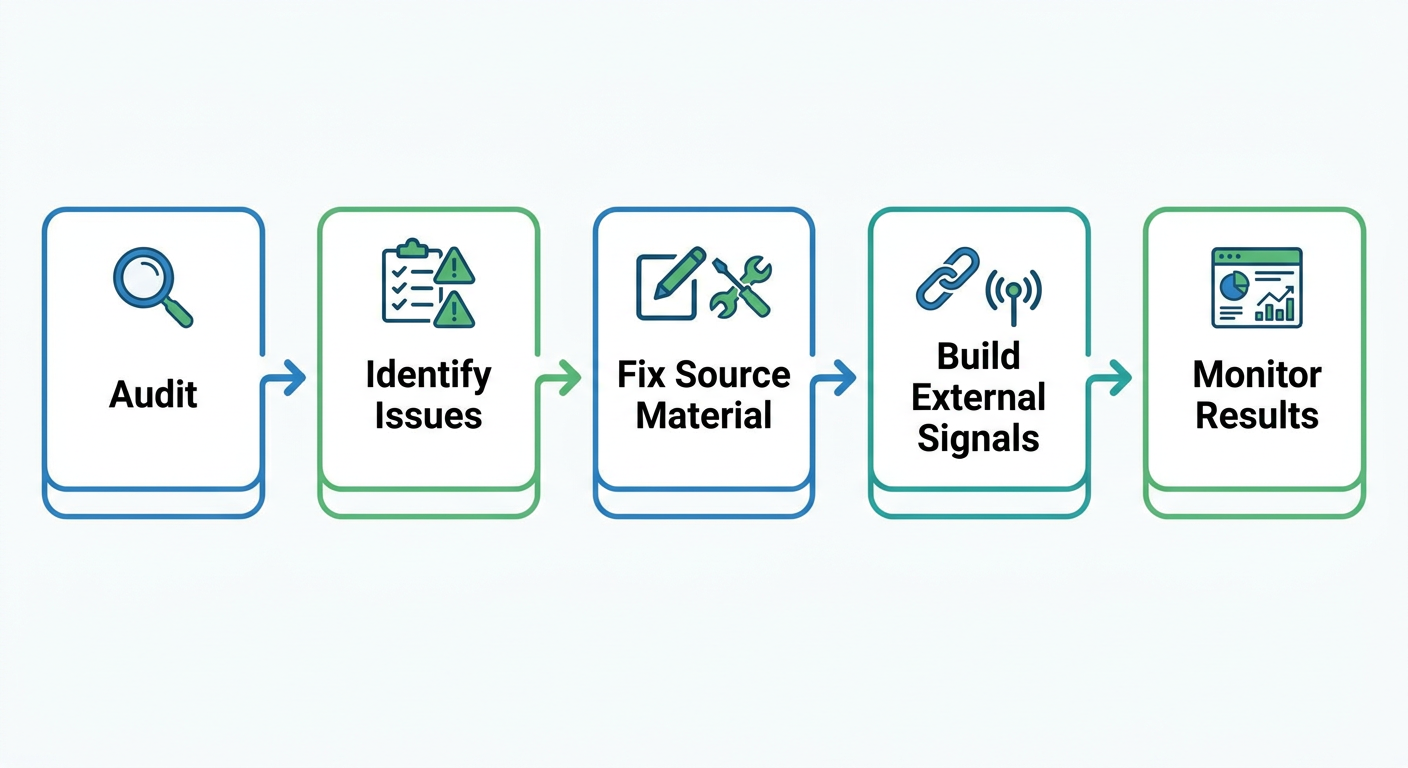

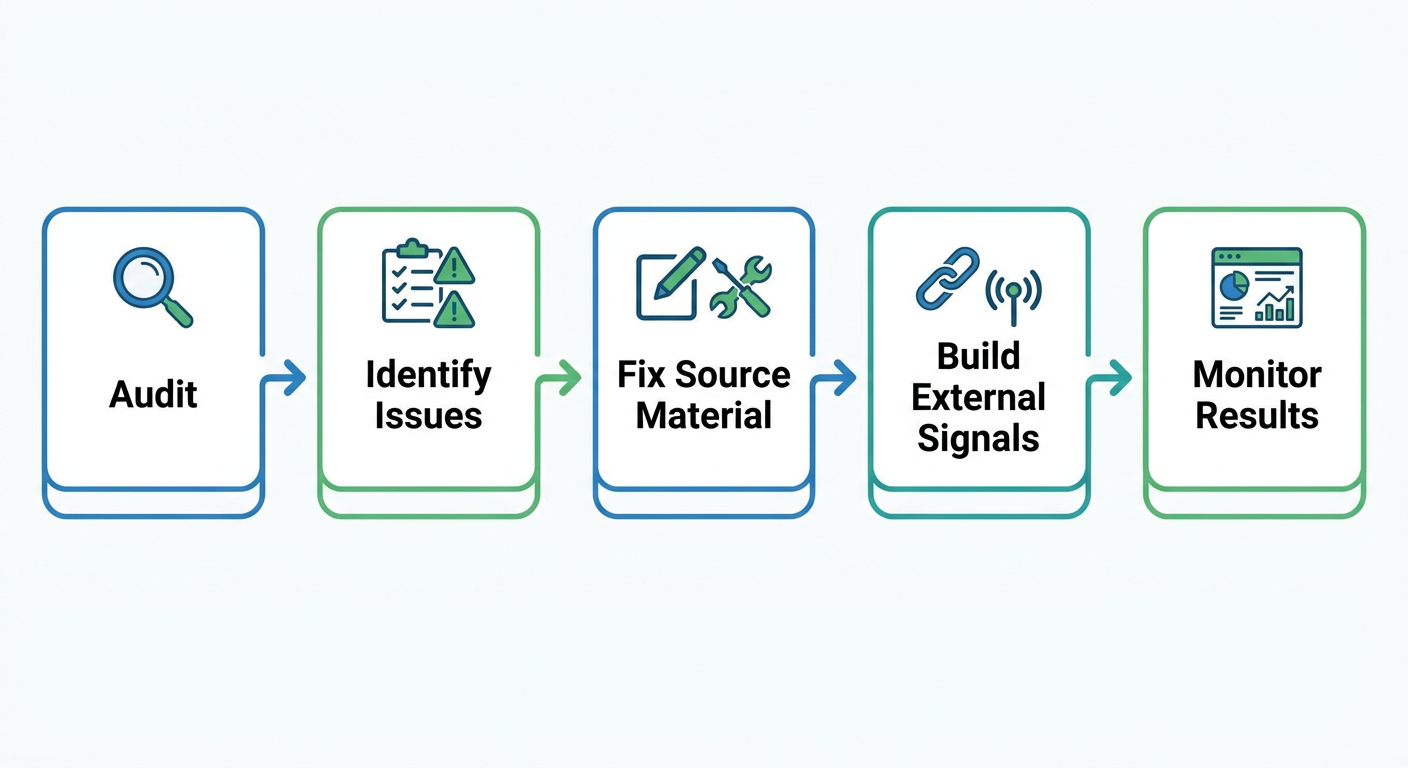

The Five-Point Reputation Audit for AI Search

Before you can fix your AI reputation, you need to know where it stands. Here's a practical framework for assessing the damage.

1. Run direct brand queries across all major AI platforms. Ask ChatGPT, Perplexity, Gemini, and Google AI Overviews: "What is [your brand]?" and "Tell me about [your company]." Document whether the responses are accurate, current, and positive.

2. Test competitive comparison prompts. Ask: "Compare [your brand] vs [competitor]" and "What's the best [your category] for [your use case]?" These are the prompts where negative sentiment hits hardest because they directly influence purchase decisions.

3. Check for outdated information. Look for discontinued features described as current, old pricing, former leadership, or resolved issues presented as ongoing problems. These inaccuracies erode trust and push users toward competitors who have cleaner AI profiles.

4. Track sentiment, not just mentions. Being mentioned is only half the story. Note whether each mention is positive ("Brand X excels at..."), neutral ("Other tools include Brand X..."), or negative ("While Brand X offers some features, users often prefer..."). The qualifiers and caveats matter as much as whether your name appears.

5. Repeat across platforms and over time. AI responses shift as models update. A monthly audit catches drift before it compounds. Weekly spot-checks on your highest-value commercial queries add an extra layer of protection.
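The five steps above can be partly scripted. The sketch below builds the audit prompts from steps 1 and 2 and applies a deliberately crude keyword check for step 4. It assumes you paste in responses you collected from each platform; it makes no API calls, and the marker lists and function names are illustrative assumptions rather than a validated sentiment model.

```python
import re

# ASSUMPTION: crude marker lists for illustration; tune them for your category.
NEGATIVE_MARKERS = re.compile(
    r"\b(?:while|however|although|often prefer|limited)\b", re.IGNORECASE
)
POSITIVE_MARKERS = re.compile(
    r"\b(?:excels|leading|best|recommended)\b", re.IGNORECASE
)


def build_audit_prompts(brand: str, competitor: str, category: str, use_case: str) -> list[str]:
    """Prompts from steps 1 and 2 of the audit, filled in for one brand."""
    return [
        f"What is {brand}?",
        f"Tell me about {brand}.",
        f"Compare {brand} vs {competitor}",
        f"What's the best {category} for {use_case}?",
    ]


def classify_mention(response: str, brand: str) -> str:
    """Label a response as absent, negative, positive, or neutral."""
    if brand.lower() not in response.lower():
        return "absent"
    # Check qualifiers first so hedged recommendations are not scored as praise.
    if NEGATIVE_MARKERS.search(response):
        return "negative"
    if POSITIVE_MARKERS.search(response):
        return "positive"
    return "neutral"


# HYPOTHETICAL brand and collected response, for demonstration only.
for prompt in build_audit_prompts("Brand X", "Brand Y", "analytics tool", "small teams"):
    print(prompt)
print(classify_mention(
    "While Brand X offers some features, users often prefer Brand Y.", "Brand X"
))
```

Keyword matching will misread plenty of real answers, so treat the labels as a first pass to sort responses for human review, not as a final score.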

How to Fix What AI Gets Wrong About Your Brand

Once you've identified the problems, here's what actually works.

Update the source material. AI models learn from what's published online. If your website still describes features you've changed, pricing you've updated, or positioning you've abandoned, the model will keep repeating the old version. Conduct a content audit and make every page accurate, current, and specific.

Build third-party corroboration. AI models cross-reference your claims against independent sources. If only your website says you're great and nobody else confirms it, the model has low confidence in recommending you. Get mentioned on review platforms like G2, Trustpilot, and Capterra. Contribute to industry publications. Engage authentically in relevant communities on Reddit and LinkedIn. Each external mention reinforces the narrative you want AI to learn.

Create content that directly answers the questions AI struggles with. If ChatGPT hedges when describing your capabilities in a specific area, that's a signal that your existing content doesn't clearly establish authority on that topic. Write comprehensive resources that address the gap. Use clear headings, specific claims, and supporting data.

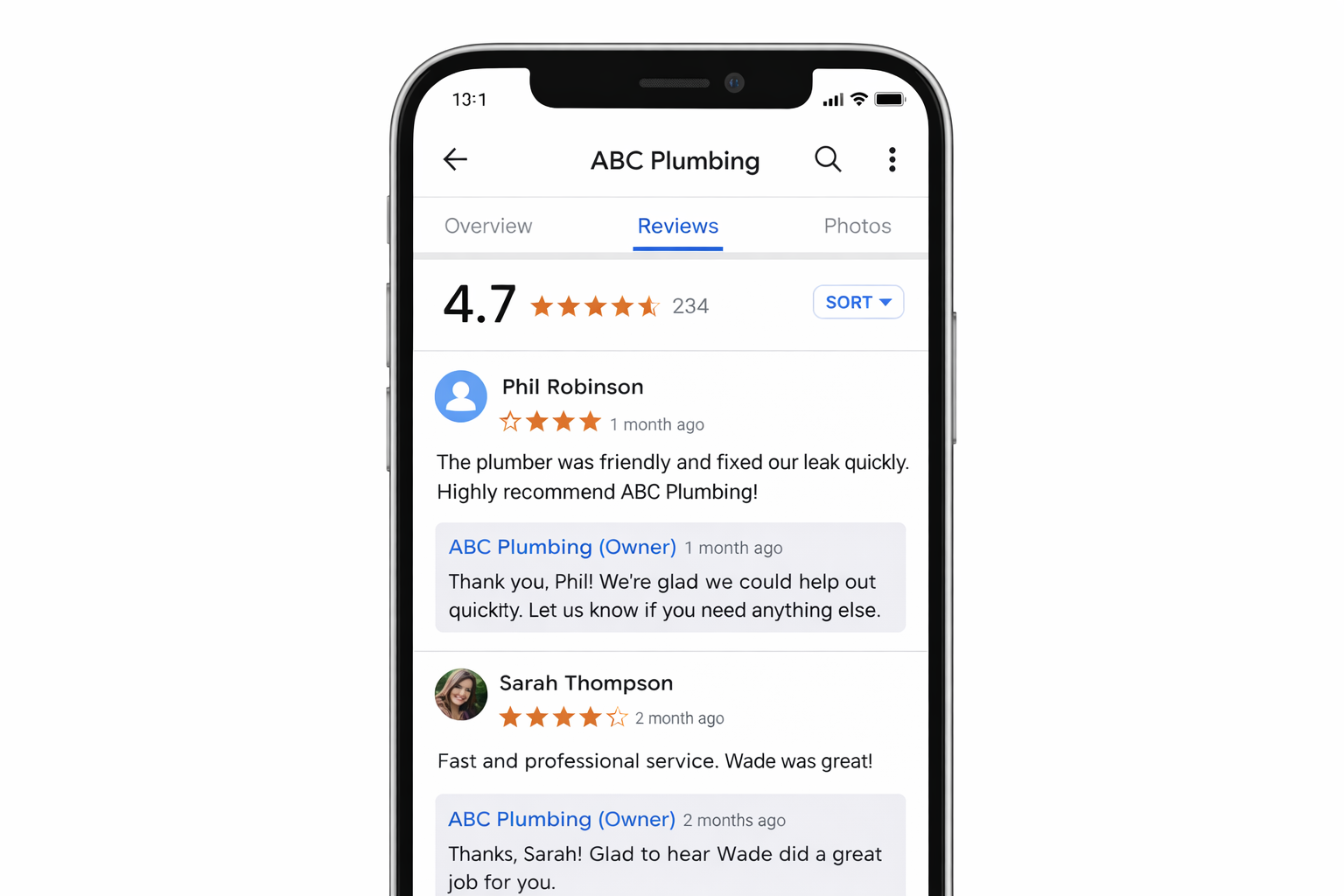

Respond to negative reviews publicly. When you address criticism with facts and solutions, you create new content that can eventually influence how AI models describe the issue. A pattern of unresolved complaints reinforces negative sentiment. A pattern of professional responses shifts the narrative.

Keep structured data current. Organization schema, product schema, and FAQ schema all help AI models interpret your brand accurately. If your structured data is outdated or missing, the model has to guess, and it often guesses wrong.
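As a minimal sketch of what current Organization schema can look like, the snippet below generates a JSON-LD block from a few fields. The property names (`name`, `url`, `description`, `sameAs`) come from schema.org; the company details and helper function names are placeholders, and a real site would usually include more properties.

```python
import json


def organization_jsonld(name: str, url: str, description: str, same_as: list[str]) -> dict:
    """Build a schema.org Organization object as a plain dictionary."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        "sameAs": same_as,  # official profiles on other sites
    }


def to_script_tag(data: dict) -> str:
    """Wrap the JSON-LD in the script tag used to embed it in a page."""
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# HYPOTHETICAL company details, for demonstration only.
org = organization_jsonld(
    name="Example Co",
    url="https://www.example.com",
    description="Analytics platform for enterprise teams.",
    same_as=["https://www.linkedin.com/company/example"],
)
print(to_script_tag(org))
```

Generating the markup from a single source of truth, such as the same record that feeds your pricing and about pages, makes it harder for the structured data to drift out of date.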

Monitoring AI Reputation at Scale

Manual auditing works for a baseline assessment, but it doesn't scale. AI responses change with every model update, and the same query can produce different answers on different days. To stay ahead, you need ongoing, automated monitoring.

RepuAI was built specifically for this problem. It tracks how your brand appears across AI search engines, monitoring not just whether you're mentioned but how you're described, what sentiment surrounds your brand, and how you compare to competitors in AI-generated answers. Instead of manually querying ChatGPT every week and logging results in a spreadsheet, you get a dashboard that surfaces changes in real time.

This matters because speed determines outcomes. Research shows that companies detecting negative AI sentiment within hours can contain the damage before it shapes broader perception. Those who discover problems days or weeks later face a much harder recovery.

You can start with a free assessment using RepuAI's AI Visibility checker, which analyzes how well your site is structured for AI search engines and flags potential issues with how AI models might interpret your content.

The Reputation Compound Effect

AI reputation damage compounds over time. A single negative pattern in training data gets reinforced with each interaction. Users who receive negative framing about your brand don't come back to check if the AI was wrong. They move on to the competitor the AI recommended instead.

But the compound effect works in both directions. Brands that actively manage their AI presence, correcting inaccuracies, building authoritative content, and maintaining strong third-party signals, create a reinforcing cycle of positive AI perception. The earlier you start, the stronger the position you build.

The question isn't whether AI search engines can damage your brand. The data from March 2026 confirms they already do, at scale, across platforms, and at the most critical moments in the purchase journey. The question is whether you're watching it happen or doing something about it.

For a deeper understanding of why brands disappear from AI answers in the first place, read our guide on why your brand doesn't appear in ChatGPT. To learn what kind of content actually earns positive AI citations, see what type of content gets cited by AI search engines. And if you want a full optimization playbook, our GEO practical guide for 2026 covers the tactical steps to improve your visibility and positioning across all AI platforms. You can also generate an llms.txt file to help AI models read your site correctly from the start.